
A customized TensorFlow Lite module for the ESP32, derived from the TensorFlow COCO-SSD model, enables the robot's ESP32-CAM camera module to identify objects on its own and autonomously follow the person in front of it. Using its built-in distance tracking algorithm, the robot can also detect that person and adjust its speed to follow at a safe distance. It can operate autonomously or be controlled manually over the local network. The algorithm suits the robot even with a minimal configuration. The experimental findings demonstrate the precision and effectiveness of the method, including the sensors, algorithms, and mapping technologies that enable the robot to identify obstacles and navigate around them.
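The follow behaviour described above can be illustrated with a minimal Python sketch. It is not the firmware from the paper: the `Detection` type, the use of bounding-box height as a stand-in for distance, the 320x240 frame size, and the gains are all assumptions made for illustration. It simply picks the most confident "person" detection and turns it into a speed and a steering value.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Detection:
    """One detector output (hypothetical structure, not a library type)."""
    label: str                               # class name, e.g. "person"
    score: float                             # confidence in [0, 1]
    box: Tuple[float, float, float, float]   # (x_min, y_min, x_max, y_max), pixels


def clamp(value: float, low: float, high: float) -> float:
    return max(low, min(high, value))


def pick_target(detections: List[Detection],
                min_score: float = 0.5) -> Optional[Detection]:
    """Return the most confident 'person' detection, or None if there is none."""
    people = [d for d in detections
              if d.label == "person" and d.score >= min_score]
    return max(people, key=lambda d: d.score, default=None)


def follow_command(target: Optional[Detection],
                   frame_width: float = 320.0,    # assumed camera resolution
                   frame_height: float = 240.0,
                   desired_fill: float = 0.6,     # assumed box height at safe distance
                   k_speed: float = 1.0,
                   k_turn: float = 1.0) -> Tuple[float, float]:
    """Return (speed, turn): speed in [0, 1], turn in [-1, 1]."""
    if target is None:
        return 0.0, 0.0                           # nobody to follow: stop
    x_min, y_min, x_max, y_max = target.box
    # Assumption: a taller box means the person is closer to the camera.
    fill = (y_max - y_min) / frame_height
    # Horizontal offset of the box centre, normalised to [-1, 1].
    offset = ((x_min + x_max) / 2 - frame_width / 2) / (frame_width / 2)
    speed = clamp(k_speed * (desired_fill - fill), 0.0, 1.0)
    turn = clamp(k_turn * offset, -1.0, 1.0)
    return speed, turn
```

With this proportional rule the robot slows as the person's box grows and stops once the box reaches `desired_fill`, which is one simple way to keep a safe following distance. A real system would need calibration of these values against measured distances.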

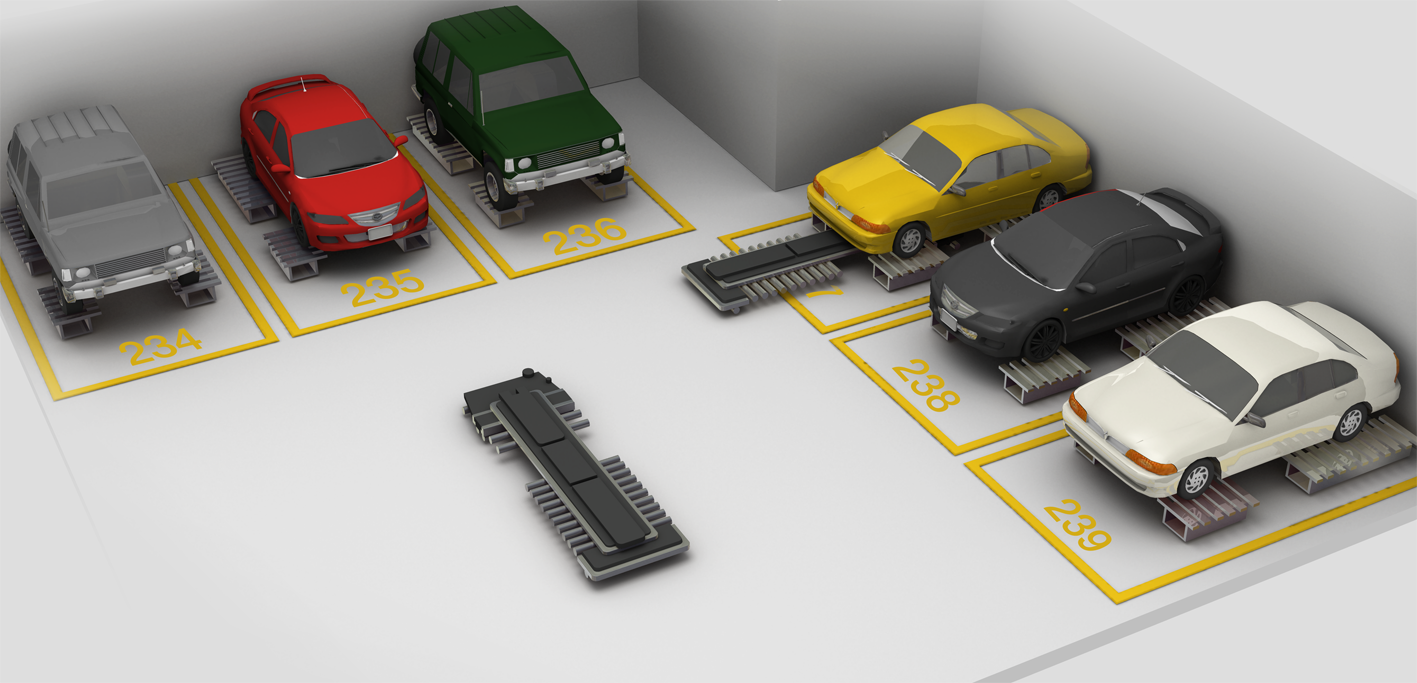

This paper proposes a solution that lets an AGV (Autonomous Guided Vehicle) robot effectively monitor a moving object using deep learning, by enabling the robot to learn and recognize movement patterns. A highly adaptable and modifiable AGV platform was built on a model of a four-wheeled self-propelled robot vehicle.

The Host Server awaits input from the tablet HMIs. When an item is selected, it creates a queue of stations to move to. Once a certain number of items have been selected or a timeout is exceeded, the queue is executed: the Host Server sets a digital output, commanding the AGV to move to the first station in the queue.

Once the AGV is in position, the Host Server commands the Robot to pick up as many items at the station as are in the queue and place each on the AGV fixture. The location of each item is recorded for use when dropping off the items. The Host Server then signals the AGV to move to the next station in the queue. This process repeats for each station until the pickup queue is empty.

Once the pickup queue is exhausted, the Host Server commands the AGV to move to the first drop-off station and commands the Robot to pick up each item destined for that station and place it in the receptacle. This is repeated for each drop-off station. Once this is complete, the AGV awaits further instructions from the Host Server.
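The queueing and dispatch behaviour of the Host Server can be sketched in Python as follows. This is an illustration only: the `Item` and `HostQueue` names, the batch size, the timeout value, the choice to start the timeout at the first selection, and the command tuples are assumptions. The digital output and the actual AGV and Robot interfaces are left out.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple


@dataclass(frozen=True)
class Item:
    name: str
    pickup_station: str
    dropoff_station: str


def _group(items: List[Item], key: Callable[[Item], str]) -> Dict[str, List[Item]]:
    """Group items by station, keeping first-seen station order."""
    groups: Dict[str, List[Item]] = {}
    for item in items:
        groups.setdefault(key(item), []).append(item)
    return groups


@dataclass
class HostQueue:
    max_items: int = 4                      # assumed batch size
    timeout_s: float = 30.0                 # assumed timeout
    clock: Callable[[], float] = time.monotonic
    items: List[Item] = field(default_factory=list)
    first_selected_at: Optional[float] = None

    def select(self, item: Item) -> None:
        """Record an item chosen on a tablet HMI."""
        if not self.items:
            self.first_selected_at = self.clock()
        self.items.append(item)

    def ready(self) -> bool:
        """True once enough items are queued or the timeout has passed."""
        if not self.items:
            return False
        timed_out = self.clock() - self.first_selected_at >= self.timeout_s
        return len(self.items) >= self.max_items or timed_out

    def plan(self) -> List[Tuple[str, str, str]]:
        """Return (actor, action, argument) commands and empty the queue."""
        commands: List[Tuple[str, str, str]] = []
        # Pickup phase: visit each pickup station and load its items.
        for station, batch in _group(self.items, lambda i: i.pickup_station).items():
            commands.append(("AGV", "move", station))
            commands += [("ROBOT", "pick", item.name) for item in batch]
        # Drop-off phase: visit each drop-off station and unload its items.
        for station, batch in _group(self.items, lambda i: i.dropoff_station).items():
            commands.append(("AGV", "move", station))
            commands += [("ROBOT", "place", item.name) for item in batch]
        commands.append(("AGV", "wait", ""))  # await further instructions
        self.items = []
        self.first_selected_at = None
        return commands
```

A caller would poll `ready()` and, when it returns `True`, send the commands from `plan()` to the AGV and Robot one at a time, waiting for each to complete before issuing the next.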
